Navigating Software Review: Enforcing Standards and Overcoming Resistance

In the domain of software development, the review process acts as a crucial checkpoint for ensuring code quality, readability, and maintainability. Recently, I found myself tasked with scrutinizing the Python codebase of a fledgling software development team, a journey fraught with complexities in enforcing coding standards and battling resistance while striving for quality assurance amidst mounting project pressure.

Disclaimer: I used ChatGPT to assist with the set up of this article. Starting with some ideas that I had to try to figure out how to do better code reviews.

Photo by Vlada Karpovich: https://www.pexels.com/photo/stressed-woman-between-her-colleagues-7433871/

My background in software development traces back to the era of Emacs terminals, where coding standards were meticulously ingrained in our workflow, essential for maintaining code quality and readability. With the emergence of the Agile movement, luminaries like Uncle Bob Martin (Clean Code) and Martin Fowler (Software Patterns) emphasized clean code and standardization, driven by the painful lessons learned from poorly maintained codebases – a reality that resonates deeply with my own experiences.

Upon immersing myself in this project, it became apparent that chaos reigned supreme. Several sprints in, and concerns about sub-par code quality were surfacing. The absence of established coding standards was glaring, prompting me to initiate a framework to instill order amidst the chaos.

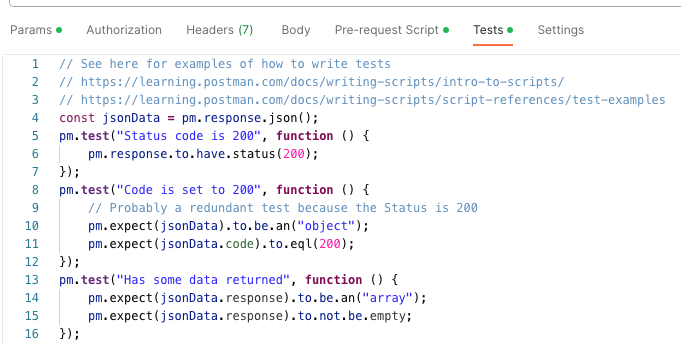

Implementing PEP8, static analysis via prospector, code duplication checks with jscpd, and security screening using bandit formed the pillars of our coding standard framework. Despite garnering initial buy-in from the team through presentations outlining the benefits of these tools, practical implementation proved challenging. Despite investing significant time and effort into documentation, shell scripts, and pre-commit hooks, the tools remained largely unused.

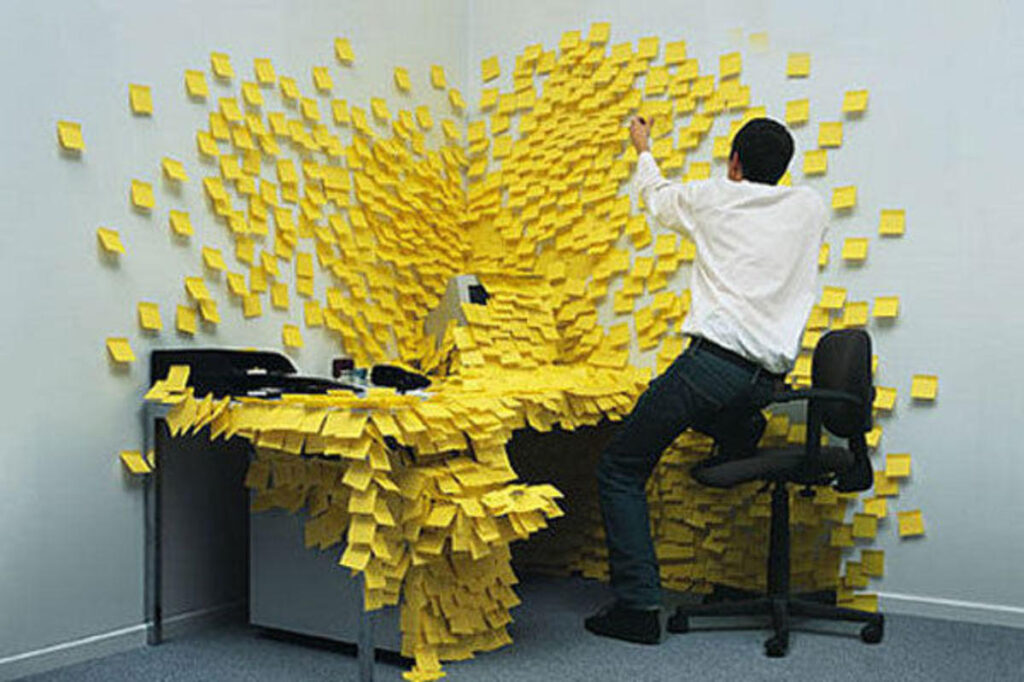

The resistance encountered can be attributed to what I term the “hoarder’s mentality” of code accumulation. With no foundational standards in place from project inception, the code base became a repository of disorganized code. Coupled with inadequate test coverage, the prospect of refactoring became fraught with risk, compounded by the relentless pressure to meet deadlines. The developers prioritized expedient solutions over long-term code quality. Effecting change was “too hard for now” and pushed aside till later.

In confronting this challenge, it’s crucial to recognize the broader implications of neglecting coding standards and quality assurance protocols from the beginning. Beyond the immediate hurdles of managing unruly codebases, the long-term consequences include increased technical debt, diminished maintainability, and compromised scalability.

When commencing a project it is really important to answer the following questions to create the “Constitution” of the project. Once created then all development must adhere to this.

- What repo are we using?

- Git process (Feature-based branching?, GitFlow?).

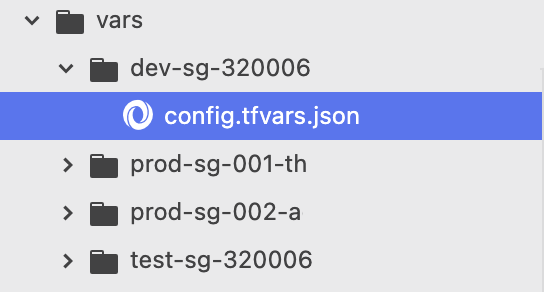

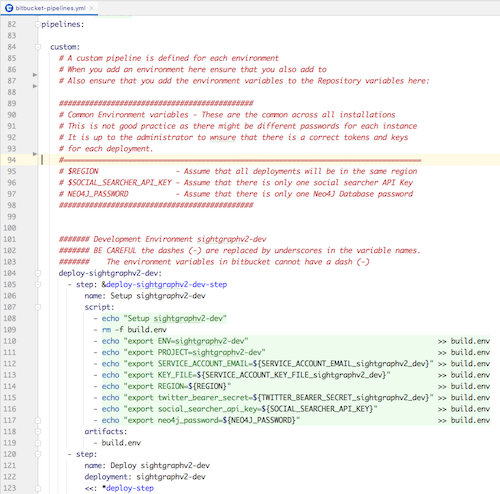

- What is the CI/CD process? ((Bitbucket has pipelines, but can be expensive. Terraform?)

- What are the standards, and how to enforce them? (Prefer automation, and static analysis to subjective reviews)

- How to do testing.

- How to write unit tests. (Unit testing can be challenging in cloud-based projects)

- What IDEs are we using?

- Implementing pre-commit hooks.

- Standards Libraries for REST, DB Access, etc.

- How to write re-usability and libraries.

- Logging

- Notification when a “human is needed”

- REST and API Standards – What does a response look like? (Best Practices for API Design)

- Third-party tools? AI, Postman, Cloud, etc. Be careful because some of the third-party tools get expensive quickly.

- What level of documentation is needed for the project?

- What happens when you don’t follow the above?

- Process for amending the project constitution. Can developers submit changes to static analysis config that accept a coding form that otherwise would be rejected? Add to Standard Libraries. How to do this quickly so that the project does not get bogged down in change control.

If these processes are not in the project from the beginning then it takes huge effort for the team to implement. Especially if the team is trying to meet delivery goals, you will never get the quality reviews part of the day-to-day process.

How to implement this after the project has started?

There is no easy solution to this. You can implement small changes. Maybe you can try to “bring in an expert” to help with reviews. More than likely, that person will be ignored as there are pressures to deliver which outweigh the word of the “expert”. (The words passive-aggressive, and lip-service come to mind) Since the team has momentum, it will be hard to divert them no matter how pure your intentions.

- It must be directive from above, as the momentum for shipping (subpar) solutions must be halted.

- The development of the project will be halted for at least one sprint.

- It is expected that the implemented solution will break.

Most of the time the above is not acceptable. So the only thing that we can do is Boy Scout rule, and try to leave the code a little better than we found it and iteratively the code will improve.